The Game

Gameplay Overview

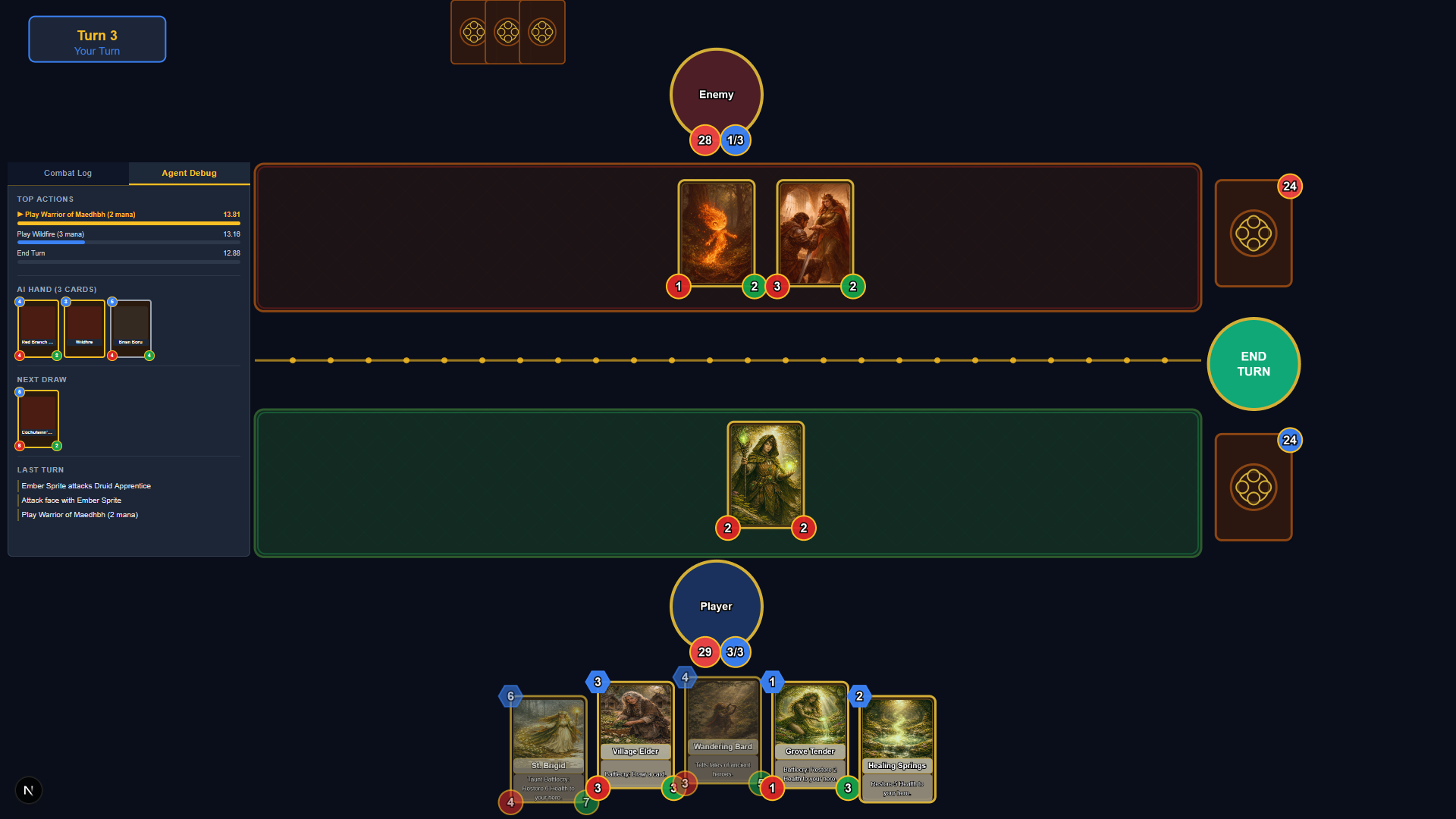

A single-player card battle game in which deck archetype, resource management, and tactical decision-making decide the outcome.

Strategic Combat

Play minion cards onto the battlefield, trade favourably, and push for lethal damage. Every mana crystal counts; playing on curve wins games.
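As a rough illustration of why every mana crystal counts, the sketch below picks the set of cards from a hand that spends as much of the available mana as possible. The function name `best_mana_play` and the idea of representing a hand as a list of costs are assumptions made for this example, not part of the game's actual code.

```python
from itertools import combinations

def best_mana_play(hand_costs, mana):
    """Return the combination of card costs that uses the most mana
    without exceeding what is available (hypothetical helper)."""
    best = ()
    # Brute force is fine here: a hand holds only a handful of cards.
    for size in range(1, len(hand_costs) + 1):
        for combo in combinations(hand_costs, size):
            if sum(best) < sum(combo) <= mana:
                best = combo
    return best

# Example: with 6 mana and cards costing 2, 3, 4 and 5,
# some pair of cards spends all 6 mana.
play = best_mana_play([2, 3, 4, 5], 6)
print(play, "uses", sum(play), "mana")
```

Spending the most mana is only a proxy for a good turn; a real decision would also weigh board state and trades.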

Taunt & Keywords

Taunt forces opponents to attack minions with Taunt before any other target. Charge lets a minion attack on the turn it is played. Battlecries and Deathrattles add depth to every turn.
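The Taunt and Charge rules can be sketched as two small checks. This is a minimal sketch under stated assumptions: the `Minion` class, its fields, the card names, and the `turns_in_play` counter are hypothetical, and only minion targets are modelled.

```python
from dataclasses import dataclass

@dataclass
class Minion:
    # Hypothetical data model, invented for this example.
    name: str
    attack: int
    health: int
    taunt: bool = False
    charge: bool = False
    turns_in_play: int = 0  # 0 means the minion was played this turn

def legal_targets(enemy_minions):
    """If any enemy minion has Taunt, only Taunt minions may be attacked."""
    taunts = [m for m in enemy_minions if m.taunt]
    return taunts if taunts else list(enemy_minions)

def can_attack(minion):
    """A minion can attack right away only if it has Charge."""
    return minion.charge or minion.turns_in_play > 0

board = [Minion("Ember Imp", 2, 1), Minion("Stone Wall", 1, 5, taunt=True)]
print([m.name for m in legal_targets(board)])
```

Keeping the rule in one function means attack validation and any AI opponent can share the same target list.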

Two Deck Archetypes

Choose aggressive fire-based tempo or defensive earth-based survival. Each archetype has a distinct strategic identity.

Structure & Random Modes

Play curated 30-card structure decks or randomly generated decks drawn from each element's full card pool, for a different game every time.
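Random deck generation could look something like the sketch below. It assumes random decks are also 30 cards and that each card may appear at most twice; the copy limit, the function name `random_deck`, and the placeholder card names are all assumptions for illustration.

```python
import random

DECK_SIZE = 30  # assumed to match the 30-card structure decks

def random_deck(card_pool, rng=None, max_copies=2):
    """Draw a random deck from one element's card pool.

    max_copies is an assumed limit on duplicates, not a confirmed rule.
    """
    rng = rng or random.Random()
    # Expand the pool so each card can be picked up to max_copies times.
    candidates = [card for card in card_pool for _ in range(max_copies)]
    if len(candidates) < DECK_SIZE:
        raise ValueError("card pool too small to build a full deck")
    return rng.sample(candidates, DECK_SIZE)

# Placeholder pool of 20 fire cards; real card names would go here.
fire_pool = [f"Fire Card {i}" for i in range(1, 21)]
deck = random_deck(fire_pool, rng=random.Random(7))
print(len(deck), "cards drawn")
```

Passing a seeded `random.Random` makes a generated deck reproducible, which helps when testing or replaying a match.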