Autonomous Vehicles · Cybersecurity · CARLA · ROS · CAN

This project presents a CARLA–ROS virtual testbed for evaluating CAN attacks, perception attacks, intrusion detection, and the effect of manipulated vehicle control and sensor data on autonomous driving behaviour.

Overview

Modern vehicles depend on internal communication networks such as the Controller Area Network (CAN). Although CAN is efficient and widely used, it lacks built-in authentication and encryption, which makes it vulnerable to spoofing, replay and denial-of-service attacks.

This project builds a virtual testbed to investigate those weaknesses in a safe and repeatable environment. CARLA is used to simulate autonomous driving, ROS manages the distributed system, SocketCAN provides a vehicle network layer, and a custom dashboard is used to trigger attacks and monitor system behaviour in real time.

Architecture

CARLA / Agent → Control → CAN Frames → Vehicle
                   ↑           ↑
                   │           │
      Sensor Safety Layer   CAN Attack Node
                   ↑           │
          LiDAR / Camera    IDS + Logger

Attacks

Throttle, brake and steering spoofing attacks inject crafted CAN messages that interfere directly with vehicle actuation. They demonstrate how malicious control commands can conflict with normal autonomous driving behaviour.
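As a rough illustration of why spoofing is possible, the sketch below packs a throttle command into the classic 16-byte SocketCAN frame layout. A classic CAN frame carries only an identifier, a length and data, so nothing in it identifies or authenticates the sender. The identifier `THROTTLE_CMD_ID`, the 0 to 1000 scaling and the helper names are assumptions made for this example, not the project's actual message definitions.

```python
import struct

# Classic SocketCAN frame layout: 32-bit CAN ID, 8-bit length,
# 3 padding bytes, then 8 data bytes (16 bytes in total).
CAN_FRAME_FMT = "=IB3x8s"

# Hypothetical identifier for throttle commands (illustration only).
THROTTLE_CMD_ID = 0x120


def build_throttle_frame(throttle):
    """Pack a throttle value (0.0 to 1.0) into a 16-byte SocketCAN frame."""
    clamped = max(0.0, min(1.0, throttle))
    raw = round(clamped * 1000)  # assumed scaling: 0..1000 as unsigned 16-bit
    payload = struct.pack("<H", raw)
    return struct.pack(
        CAN_FRAME_FMT, THROTTLE_CMD_ID, len(payload), payload.ljust(8, b"\x00")
    )


def parse_frame(frame):
    """Return (can_id, data) from a 16-byte SocketCAN frame."""
    can_id, length, data = struct.unpack(CAN_FRAME_FMT, frame)
    return can_id, data[:length]


# A spoofed "full throttle" frame is byte-for-byte what a legitimate
# controller would send: the frame has no sender or signature field.
spoofed = build_throttle_frame(1.0)
print(parse_frame(spoofed))
```

On a Linux host, bytes in this layout could be written to a raw SocketCAN socket bound to a virtual interface; that step is left out here so the sketch runs anywhere.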

In a replay attack, legitimate CAN traffic is captured and replayed later in a different driving context. This shows that valid historical traffic can still be dangerous when reused at the wrong time.
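One way to picture the replay step is as a time shift applied to a recorded capture: the frames and their relative spacing stay the same, only the start time changes. The sketch below assumes a simple in-memory capture; `RecordedFrame`, `replay_schedule` and the identifier `0x130` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecordedFrame:
    timestamp: float  # seconds
    can_id: int
    data: bytes


def replay_schedule(capture, start_time):
    """Shift a capture so it begins at start_time, keeping inter-frame gaps."""
    if not capture:
        return []
    ordered = sorted(capture, key=lambda f: f.timestamp)
    offset = start_time - ordered[0].timestamp
    return [RecordedFrame(f.timestamp + offset, f.can_id, f.data) for f in ordered]


# Hypothetical brake frames captured while the vehicle was stopping.
capture = [
    RecordedFrame(10.00, 0x130, b"\x01"),
    RecordedFrame(10.05, 0x130, b"\x01"),
    RecordedFrame(10.10, 0x130, b"\x00"),
]

# The same frames, scheduled for a later moment in a different driving context.
replayed = replay_schedule(capture, start_time=500.0)
for frame in replayed:
    print(round(frame.timestamp, 2), hex(frame.can_id), frame.data.hex())
```

Because the payloads are unchanged, a receiver that only checks identifier and format has no basis for rejecting them.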

A denial-of-service attack floods critical CAN identifiers with high-rate control frames, creating contention with legitimate traffic and disrupting the availability of the control path.
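The effect of flooding can be illustrated with a toy model. It assumes a receiver that, at each control tick, applies whichever frame arrived most recently on the targeted identifier; this "last frame wins" behaviour, the function name and all rates below are assumptions for the sketch, not measurements from the testbed.

```python
def latest_sender_at_ticks(legit_period_us, attack_period_us, tick_period_us, duration_us):
    """For each control tick, report which sender wrote the most recent frame."""
    # Legitimate frames start at t = 0; attacker frames are offset by half a period.
    events = [(t, "legit") for t in range(0, duration_us, legit_period_us)]
    events += [
        (t, "attacker")
        for t in range(attack_period_us // 2, duration_us, attack_period_us)
    ]
    events.sort()

    winners = []
    idx = 0
    last = None
    for tick in range(tick_period_us, duration_us + 1, tick_period_us):
        # Consume every frame that arrived up to and including this tick.
        while idx < len(events) and events[idx][0] <= tick:
            last = events[idx][1]
            idx += 1
        winners.append(last)
    return winners


# Assumed rates: legitimate control at 20 Hz, flood at 1 kHz, receiver ticks at 50 Hz.
winners = latest_sender_at_ticks(
    legit_period_us=50_000,
    attack_period_us=1_000,
    tick_period_us=20_000,
    duration_us=1_000_000,
)
attacker_share = winners.count("attacker") / len(winners)
print(f"attacker frame applied on {attacker_share:.0%} of control ticks")
```

In this model the legitimate command is applied only when it happens to arrive between the last flood frame and the tick, which is why a high enough flood rate dominates the control path.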

In the sensor attack, a false obstacle is inserted into LiDAR-derived perception data before it reaches the safety logic. This causes the vehicle to slow or brake even when the road is clear.
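A minimal sketch of this idea, assuming LiDAR points are `(x, y, z)` tuples in the vehicle frame with `x` pointing forward, and a safety check that brakes when any point falls inside a corridor ahead. The thresholds, the function names and the `"CLEAR"` state label are hypothetical; only the `BRAKE` state comes from the project description.

```python
def safety_state(points, brake_distance=8.0, half_width=1.0):
    """Return "BRAKE" if any point lies in the corridor directly ahead."""
    for x, y, _z in points:
        if 0.0 < x <= brake_distance and abs(y) <= half_width:
            return "BRAKE"
    return "CLEAR"  # hypothetical name for the non-braking state


def inject_false_obstacle(points, distance=5.0, width=1.0, height=1.0, step=0.25):
    """Append a flat wall of fabricated points at the given forward distance."""
    n_y = int(width / step) + 1
    n_z = int(height / step) + 1
    fake = [
        (distance, -width / 2 + i * step, j * step)
        for i in range(n_y)
        for j in range(n_z)
    ]
    return list(points) + fake


# Two genuine returns far from the driving corridor: the road is clear.
clear_road = [(20.0, 3.5, 0.2), (35.0, -4.0, 0.5)]
attacked = inject_false_obstacle(clear_road)

print(safety_state(clear_road))
print(safety_state(attacked))
```

The control commands themselves are never touched here; the vehicle brakes because the safety logic trusts the falsified perception data.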

A rule-based intrusion detection system monitors CAN traffic, identifies suspicious injected frames and high-rate flooding, and displays live alerts in the dashboard.
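The sketch below shows what two such rules might look like: an allowlist of expected identifiers and a per-identifier frame-rate limit over a sliding window. The class name, alert labels, identifiers and thresholds are assumptions for illustration and are not taken from the project's IDS.

```python
from collections import defaultdict, deque


class RuleBasedIDS:
    """Toy CAN IDS with an identifier allowlist and a sliding-window rate limit."""

    def __init__(self, allowed_ids, max_frames_per_window=50, window_s=1.0):
        self.allowed_ids = set(allowed_ids)
        self.max_frames = max_frames_per_window
        self.window_s = window_s
        self.history = defaultdict(deque)  # can_id -> recent timestamps

    def inspect(self, timestamp, can_id):
        """Return the list of alerts raised by a single observed frame."""
        alerts = []
        if can_id not in self.allowed_ids:
            alerts.append("UNKNOWN_ID")

        recent = self.history[can_id]
        recent.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while recent and timestamp - recent[0] > self.window_s:
            recent.popleft()
        if len(recent) > self.max_frames:
            alerts.append("FLOOD")
        return alerts


ids = RuleBasedIDS(allowed_ids={0x120, 0x130})

# Normal 20 Hz traffic stays well under the rate limit.
normal = [ids.inspect(i * 0.05, 0x120) for i in range(40)]

# A 1 kHz burst on the same identifier exceeds it.
flood = [ids.inspect(10.0 + i * 0.001, 0x120) for i in range(100)]

# A frame with an identifier outside the allowlist is flagged immediately.
unknown = ids.inspect(20.0, 0x7FF)
print(flood[-1], unknown)
```

Rules of this kind catch flooding and unexpected identifiers, but a spoofed or replayed frame that uses a valid identifier at a normal rate would pass both checks.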

Attack activity, IDS events and CAN traffic are logged and displayed live, making the system suitable for demonstration, debugging and evaluation.

Results

The project highlights the difference between direct actuation attacks that manipulate vehicle commands and indirect perception attacks that influence driving decisions by falsifying environmental understanding.

Screenshots

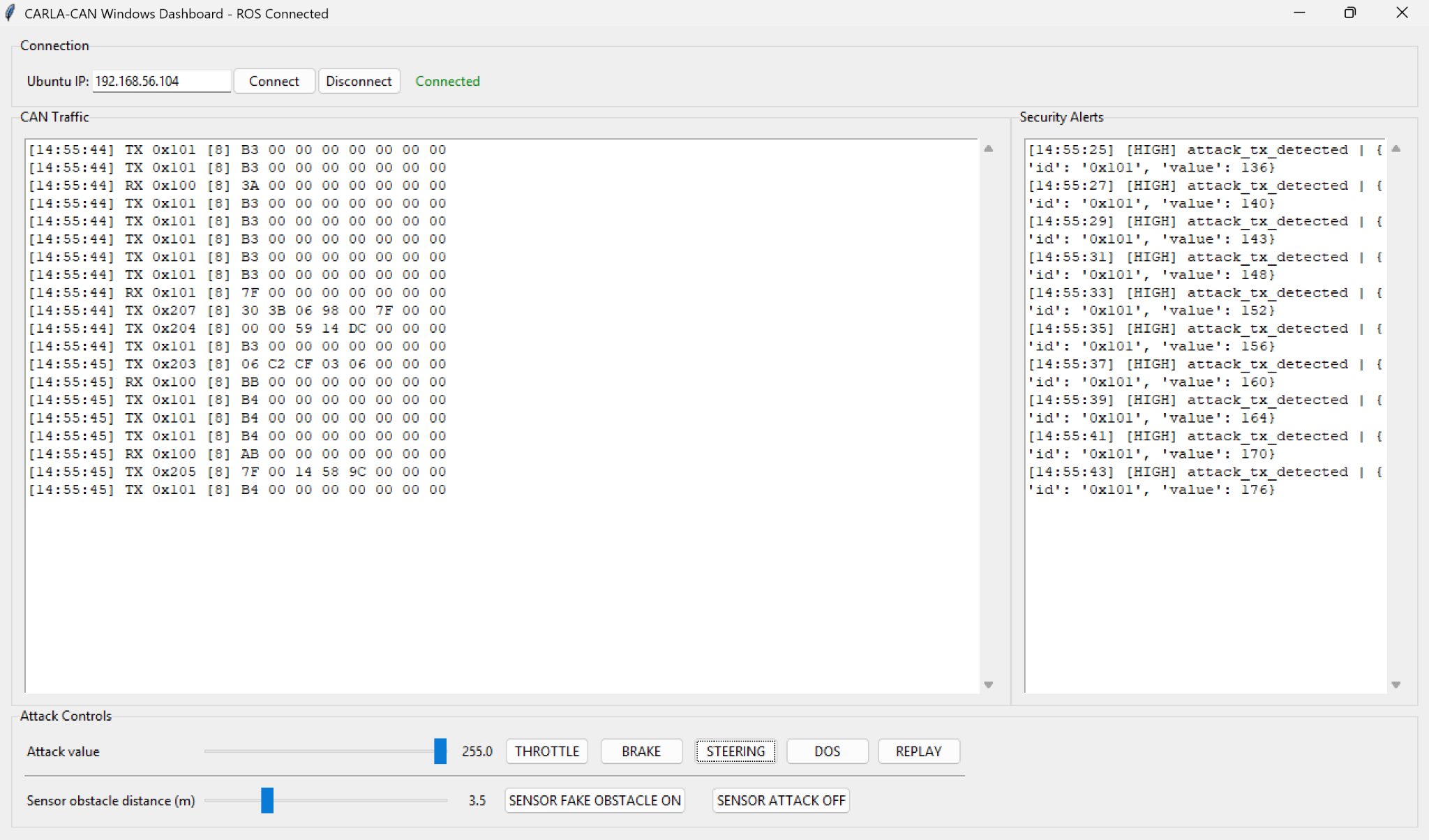

The custom dashboard displays CAN traffic, IDS alerts, connection status and attack controls for both CAN-based and sensor-based attacks.

The sensor attack injects a false obstacle into LiDAR-derived data, causing the safety state to switch to BRAKE even though the road ahead is clear.

Technology Stack