Running Sikraken On EC2 And ECS

The Problem

Sikraken is a research tool by Dr Christophe Meudec that generates test inputs aimed at achieving branch coverage on a broad, well-established set of C benchmarks curated by Test-Comp. A single run of Sikraken against 206 of these benchmarks can take 3 hours and 45 minutes, even when run in parallel on 18 cores.

Approach

This project aimed to find the cheapest and fastest way to run Sikraken against its benchmarks using three AWS compute services: Elastic Compute Cloud (EC2), Elastic Container Service (ECS) on Fargate, and AWS Batch. A pipeline was built for each service. The Batch pipeline shown below, running on EC2 Spot Instances, proved the most efficient: using 60 vCPUs, it runs Sikraken against the same set of benchmarks in under 1 hour and 10 minutes for approximately €1.80.
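
Comparing this result with the 18-core baseline above gives a rough sense of where the speedup comes from. The arithmetic below treats a vCPU as equivalent to a core and assumes both runs keep every processor busy for the whole wall-clock time. The measurements do not confirm either assumption.

```latex
\begin{aligned}
\text{Baseline: } & 18\ \text{cores} \times 3.75\,\text{h} = 67.5\ \text{core-hours} \\
\text{Batch: } & 60\ \text{vCPUs} \times \tfrac{70}{60}\,\text{h} = 70\ \text{vCPU-hours} \\
\text{Speedup: } & \tfrac{225\,\text{min}}{70\,\text{min}} \approx 3.21
  \quad\text{vs.}\quad \tfrac{60}{18} \approx 3.33\ \text{(capacity ratio)}
\end{aligned}
```

Under those assumptions, both runs use a similar amount of compute. The roughly 3.2× speedup also tracks the roughly 3.3× increase in parallel capacity. This suggests the gain comes mainly from wider fan-out rather than faster per-benchmark execution.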

Evaluation & Comparison

Each architecture was evaluated based on execution time, cost, and operational complexity. All pipelines were tested using the same benchmark set to ensure a fair comparison.

| Architecture | Execution Time | Cost |
|---|---|---|
| EC2 (r5.xlarge) | ~12h 10m | ~€1.80 |
| ECS Fargate | ~1h 30m | ~€3.65 |
| AWS Batch (EC2 Spot) | ~1h 10m | ~€1.80 |
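
The relative figures behind this comparison can be reproduced from the table. The sketch below copies the table's approximate values and computes each architecture's speedup over the single-instance EC2 run. The `Run` type and `summarise` helper are illustrative names, not part of the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    name: str
    minutes: int     # approximate wall-clock time from the table
    cost_eur: float  # approximate cost from the table


RUNS = [
    Run("EC2 (r5.xlarge)", 12 * 60 + 10, 1.80),
    Run("ECS Fargate", 60 + 30, 3.65),
    Run("AWS Batch (EC2 Spot)", 60 + 10, 1.80),
]


def summarise(runs, baseline_name="EC2 (r5.xlarge)"):
    """Return (name, speedup over the baseline rounded to 1 dp, cost) per run."""
    baseline = next(r for r in runs if r.name == baseline_name)
    return [(r.name, round(baseline.minutes / r.minutes, 1), r.cost_eur) for r in runs]


if __name__ == "__main__":
    for name, speedup, cost in summarise(RUNS):
        print(f"{name:<22} {speedup:>5.1f}x  €{cost:.2f}")
```

On these figures, Batch is about 10.4 times faster than the single EC2 instance at the same cost. Fargate is about 8.1 times faster at roughly double the cost.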

The AWS Batch implementation performed best overall: it achieved the shortest execution time while matching the lowest cost. By using Spot Instances and array jobs, it distributed the workload efficiently while keeping infrastructure overhead to a minimum.
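
As a minimal sketch of the Spot side of this setup, the function below builds the keyword arguments for Boto3's `batch.create_compute_environment`. The environment name, subnets, security groups and instance profile are hypothetical placeholders. The allocation strategy and `maxvCpus=60`, which matches the 60 vCPUs reported above, are assumptions rather than the project's actual configuration.

```python
def spot_compute_environment(name, subnets, security_group_ids, instance_profile, max_vcpus=60):
    """Build kwargs for boto3.client("batch").create_compute_environment(...).

    All argument values are hypothetical; only the parameter names follow the
    AWS Batch CreateComputeEnvironment API.
    """
    return {
        "computeEnvironmentName": name,
        "type": "MANAGED",  # AWS Batch provisions and scales the instances
        "state": "ENABLED",
        "computeResources": {
            "type": "SPOT",  # run on EC2 Spot capacity
            # Prefer the Spot pools least likely to be interrupted
            "allocationStrategy": "SPOT_CAPACITY_OPTIMIZED",
            "minvCpus": 0,  # scale to zero when no jobs are queued
            "maxvCpus": max_vcpus,
            "instanceTypes": ["optimal"],
            "subnets": list(subnets),
            "securityGroupIds": list(security_group_ids),
            "instanceRole": instance_profile,  # ECS instance profile name or ARN
        },
    }
```

The result would be passed as `boto3.client("batch").create_compute_environment(**params)`. Setting `minvCpus` to 0 means no instances are billed while the queue is empty. Because Spot capacity can be reclaimed, a `retryStrategy` on the job definition is one way to rerun interrupted children.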

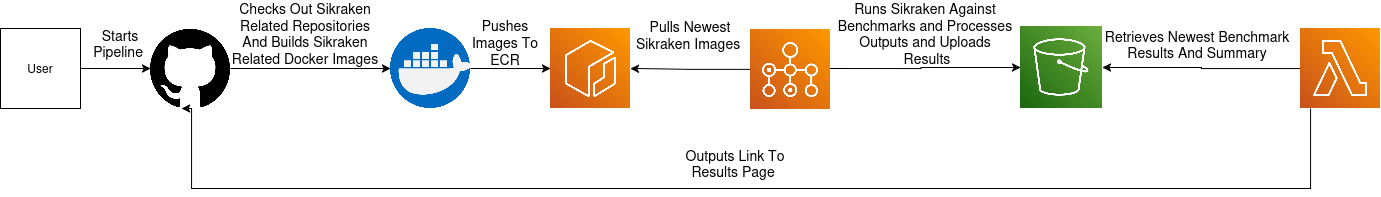

Architecture Diagram

The pipeline is triggered by the user and begins by checking out all Sikraken-related repositories. Sikraken and the report-generation scripts, which live in a separate developer repository, are then each containerised into a Docker image.
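
Here is a minimal sketch of the build step. It assumes each image is built from its own checked-out directory and tagged directly with its ECR repository URI, so it can be pushed without re-tagging. The account ID, region, repository names and directories are hypothetical. The host format `<account>.dkr.ecr.<region>.amazonaws.com` is ECR's standard private registry address. Docker must already be authenticated to the registry, for example with `aws ecr get-login-password | docker login --username AWS --password-stdin <registry>`.

```python
import subprocess


def ecr_image_uri(account_id: str, region: str, repository: str, tag: str = "latest") -> str:
    """Full URI of an image in a private ECR repository."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def build_and_push_commands(image_uri: str, context_dir: str) -> list[list[str]]:
    """Docker commands that build an image from context_dir and push it to ECR."""
    return [
        ["docker", "build", "-t", image_uri, context_dir],
        ["docker", "push", image_uri],
    ]


def run_all(commands: list[list[str]]) -> None:
    # check=True raises CalledProcessError on the first failing command
    for cmd in commands:
        subprocess.run(cmd, check=True)
```

For example, `run_all(build_and_push_commands(ecr_image_uri("123456789012", "eu-west-1", "sikraken"), "sikraken/"))` would build and push the Sikraken image. The report image would follow the same pattern with its own repository and directory.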

The newly built images are pushed to Amazon Elastic Container Registry (ECR), making them available for execution. AWS Batch pulls these images and prepares an array job whose size matches the job count requested by the pipeline.
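
The array job submission can be sketched as the keyword arguments for Boto3's `batch.submit_job`. The queue and job definition names are hypothetical. Passing the job count to the containers through a `JOB_COUNT` environment variable is also an assumption, made so each child knows how many shards exist. AWS Batch requires an array size between 2 and 10,000.

```python
def array_job_request(job_name: str, job_queue: str, job_definition: str, job_count: int) -> dict:
    """Build kwargs for boto3.client("batch").submit_job(...) for an array job."""
    # AWS Batch accepts array sizes from 2 to 10,000
    if not 2 <= job_count <= 10_000:
        raise ValueError(f"array size must be between 2 and 10,000, got {job_count}")
    return {
        "jobName": job_name,
        "jobQueue": job_queue,
        "jobDefinition": job_definition,
        "arrayProperties": {"size": job_count},
        # Hypothetical variable so each child can work out its share of benchmarks
        "containerOverrides": {
            "environment": [{"name": "JOB_COUNT", "value": str(job_count)}]
        },
    }
```

Calling `boto3.client("batch").submit_job(**array_job_request("sikraken-run", "sikraken-queue", "sikraken-job", 20))` returns a response whose `jobId` identifies the parent array job. AWS Batch then sets `AWS_BATCH_JOB_ARRAY_INDEX` in each child container, from 0 up to one less than the array size.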

The array job runs multiple Sikraken containers in parallel, distributing the benchmarks across the array job's indices. Inside each container, a Bash script executes the test run and uploads Sikraken's log files to an S3 bucket.
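
The project performs this step with a Bash script. The Python sketch below only illustrates one way a child could select its benchmarks. It reads the `AWS_BATCH_JOB_ARRAY_INDEX` variable that AWS Batch sets in every array child and assumes a hypothetical `JOB_COUNT` variable holds the array size. Benchmarks are assigned round-robin, and the S3 key layout in `log_key` is likewise hypothetical.

```python
import os
from typing import Mapping, Sequence


def shard(benchmarks: Sequence[str], index: int, count: int) -> list[str]:
    """Round-robin share of the benchmarks for array child `index` of `count`."""
    if not 0 <= index < count:
        raise ValueError(f"index {index} outside 0..{count - 1}")
    return list(benchmarks[index::count])


def my_benchmarks(benchmarks: Sequence[str], environ: Mapping[str, str] = os.environ) -> list[str]:
    """Pick this container's benchmarks from its AWS Batch array index."""
    index = int(environ["AWS_BATCH_JOB_ARRAY_INDEX"])  # set by AWS Batch
    count = int(environ["JOB_COUNT"])  # hypothetical, passed at submission
    return shard(benchmarks, index, count)


def log_key(run_id: str, index: int, filename: str) -> str:
    """Hypothetical S3 key for one child's log file."""
    return f"runs/{run_id}/child-{index}/{filename}"
```

Round-robin is only one choice. It avoids placing a run of neighbouring, similarly expensive benchmarks on a single child. Contiguous slices would work just as well if every benchmark took about the same time.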

Once the Sikraken array job completes, a second container runs as a dependent Batch job to process the results. It runs Bash scripts that generate performance reports, which are also stored in an S3 bucket.
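
The dependency can be expressed through the `dependsOn` parameter of `batch.submit_job`. When a standard (non-array) job depends on an array job's parent ID, AWS Batch waits for all of the child jobs to complete before starting it. The names below and the `RESULTS_PREFIX` variable are hypothetical.

```python
def report_job_request(job_name: str, job_queue: str, job_definition: str,
                       array_job_id: str, results_prefix: str) -> dict:
    """Build kwargs for boto3.client("batch").submit_job(...) for the report job."""
    return {
        "jobName": job_name,
        "jobQueue": job_queue,
        "jobDefinition": job_definition,
        # Depending on the parent array job ID waits for every child to finish
        "dependsOn": [{"jobId": array_job_id}],
        "containerOverrides": {
            # Hypothetical variable telling the report scripts where the logs are
            "environment": [{"name": "RESULTS_PREFIX", "value": results_prefix}]
        },
    }
```

Here `array_job_id` would be the `jobId` returned when the Sikraken array job was submitted.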

Finally, an AWS Lambda function uses the Boto3 SDK to locate the newly generated reports in the S3 bucket and create URLs pointing to them, which the pipeline then outputs to the user.
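
The source does not say which kind of URL the Lambda function returns. The sketch below assumes S3 presigned URLs generated with Boto3's `generate_presigned_url`, which grant temporary access to private objects. The event fields `bucket` and `prefix`, the one-hour expiry and the response shape are hypothetical. The S3 client is injectable so the handler can be exercised without AWS; inside Lambda it falls back to `boto3.client("s3")`.

```python
URL_TTL_SECONDS = 3600  # hypothetical expiry for each link


def lambda_handler(event, context, s3=None):
    """Return presigned download URLs for report objects under a prefix."""
    if s3 is None:
        import boto3  # included in the AWS Lambda Python runtime
        s3 = boto3.client("s3")

    bucket = event["bucket"]
    prefix = event.get("prefix", "")
    # list_objects_v2 returns at most 1,000 keys per call, enough for a few reports
    listing = s3.list_objects_v2(Bucket=bucket, Prefix=prefix)

    urls = [
        s3.generate_presigned_url(
            "get_object",
            Params={"Bucket": bucket, "Key": obj["Key"]},
            ExpiresIn=URL_TTL_SECONDS,
        )
        for obj in listing.get("Contents", [])
    ]
    return {"urls": urls}
```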