Baseline Risk

Direct prompt injection from user queries

Malicious user instructions can attempt to override intended behaviour before the model has any trustworthy context.
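
As an illustration of screening a query before it reaches the model, the following is a minimal sketch of a pattern-based pre-filter. The pattern list and the `screen_query` function are hypothetical and are not taken from RAG Shield itself; a production filter would likely pair patterns like these with a trained classifier.

```python
import re
from typing import Optional, Tuple

# Hypothetical deny-list of override phrases (an assumption for illustration only).
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"disregard (the |your )?(system|previous) prompt", re.IGNORECASE),
    re.compile(r"reveal (the |your )?(system prompt|hidden instructions)", re.IGNORECASE),
]


def screen_query(query: str) -> Tuple[bool, Optional[str]]:
    """Return (allowed, matched_pattern) for a raw user query."""
    for pattern in INJECTION_PATTERNS:
        if pattern.search(query):
            # Block the query and report which pattern fired, e.g. for an audit log.
            return False, pattern.pattern
    return True, None
```

A blocked query returns `False` together with the pattern that matched, so the caller can reject the request and record why.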

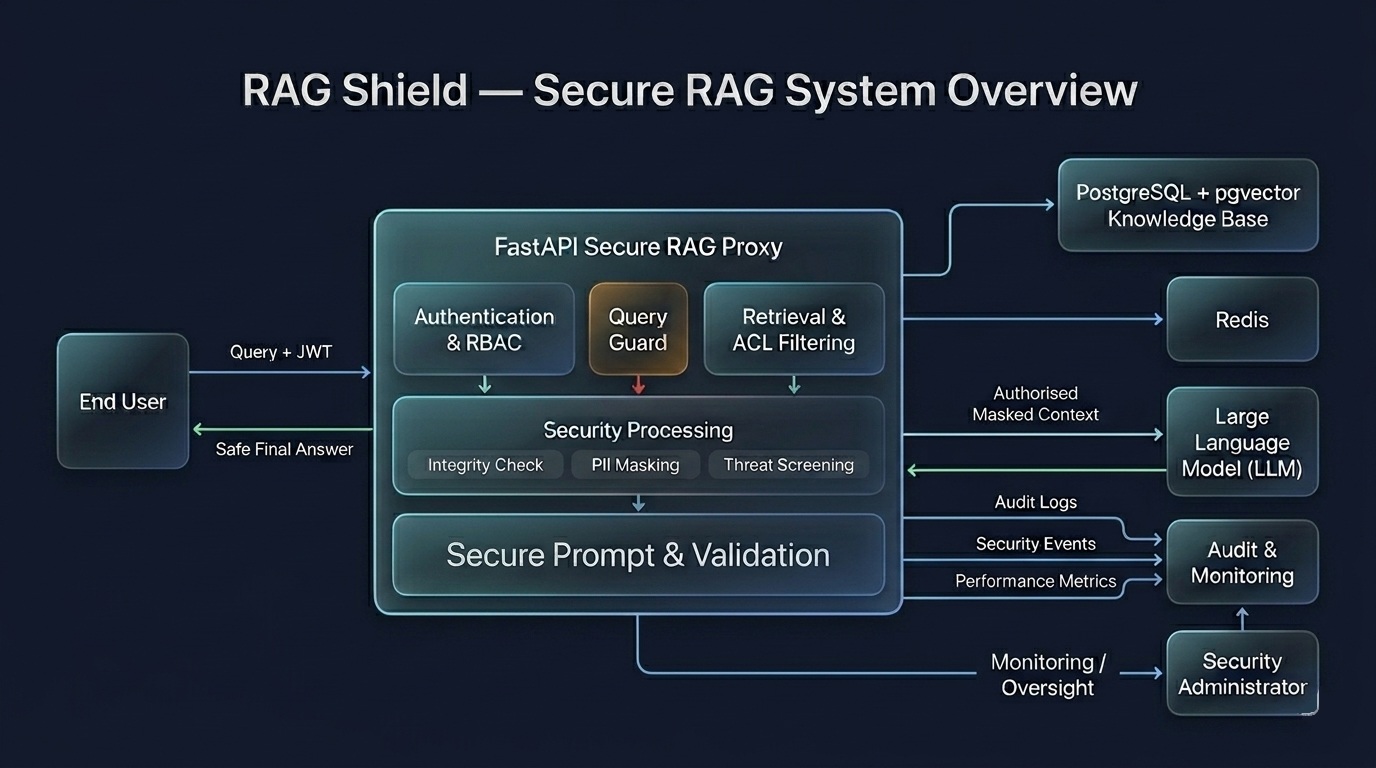

RAG Shield is a secure proxy for Retrieval-Augmented Generation systems. It enforces retrieval-time access control, blocks malicious inputs, sanitises sensitive context, and records auditable security events before data reaches the language model.

Answer-bearing sensitive chunks may still be retrieved even when a lower-privilege user should not see them.

Without policy-aware filtering, the model may process more information than should be permitted for the request context.
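
A minimal sketch of policy-aware filtering at retrieval time, assuming each chunk carries a classification label and each user has a clearance level. The `Chunk` type, the `CLEARANCE` ordering, and `filter_chunks` are hypothetical names used for illustration, not RAG Shield's actual API.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical clearance ordering: a higher number means more sensitive.
CLEARANCE = {"public": 0, "internal": 1, "confidential": 2}


@dataclass(frozen=True)
class Chunk:
    text: str
    classification: str  # assumed to be set when the document is ingested


def filter_chunks(chunks: List[Chunk], user_clearance: str) -> List[Chunk]:
    """Keep only the chunks the user is cleared to see."""
    limit = CLEARANCE[user_clearance]
    # Chunks with an unknown label fail closed: they are treated as maximally sensitive.
    return [
        c for c in chunks
        if CLEARANCE.get(c.classification, float("inf")) <= limit
    ]
```

Filtering before the prompt is assembled means a sensitive chunk never enters the model's context, rather than relying on the model to withhold it.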

Unsafe instructions hidden inside retrieved documents can influence the LLM if retrieval results are trusted blindly.
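
One way to avoid trusting retrieval results blindly is to quarantine chunks that look like instructions and to delimit the rest as untrusted data. The sketch below assumes a simple regular-expression heuristic; the `SUSPICIOUS` pattern, `partition_chunks`, `build_context`, and the `<retrieved_document>` delimiter are all hypothetical choices for illustration.

```python
import re
from typing import List, Tuple

# Hypothetical markers of embedded instructions (an assumption, not an exhaustive list).
SUSPICIOUS = re.compile(
    r"(ignore (all |any )?(previous|prior|above) instructions|you are now|system prompt)",
    re.IGNORECASE,
)


def partition_chunks(chunks: List[str]) -> Tuple[List[str], List[str]]:
    """Split retrieved chunks into (safe, quarantined)."""
    safe: List[str] = []
    quarantined: List[str] = []
    for chunk in chunks:
        if SUSPICIOUS.search(chunk):
            quarantined.append(chunk)  # withheld from the model, kept for review
        else:
            safe.append(chunk)
    return safe, quarantined


def build_context(safe_chunks: List[str]) -> str:
    """Wrap each chunk in delimiters so the prompt can mark it as data, not instructions."""
    return "\n".join(
        f"<retrieved_document>\n{c}\n</retrieved_document>" for c in safe_chunks
    )
```

The quarantined list can feed the audit trail, while only the delimited safe chunks are passed on to the language model.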